TL;DR

If shoppers do not trust the Smart Cart to deliver a consistently accurate, reliable, and convenient experience, adoption will stall, and Kroger may miss a key opportunity for tech-driven growth. This research identifies the changes needed to move the cart from a pilot to repeated, everyday use.

The Problem

Caper’s Smart Cart was piloted in a Kroger-owned grocery store to demonstrate adoption and reliability before a broader rollout. The value proposition included faster checkout, autonomous shopping, and real-time spending visibility, but in-store usage revealed inconsistent adoption and a high rate of incomplete or disrupted shopping sessions. Without shopper trust and reliable trip completion, the pilot risked stalling, which would make commercial expansion difficult.

What I Did

I spent four weeks on-site during the live pilot, conducting rapid, mixed-method field diagnostics. This included real-time observation across the entire shopping journey, semi-moderated intercept interviews, and weekly tracking of incomplete or disrupted sessions. I improved the structured intercept survey by correcting question bias, segmenting first-time and returning users, balancing prompts, and adding follow-up questions. All findings were translated into a severity-ranked, cross-functional triage, distinguishing UX, engineering, operations, and hardware constraints.

Outcomes

Observed that approximately one in five sessions (65 of 314, or 20.7%) were incomplete or disrupted during the pilot, indicating that reliability and exception handling significantly affected completion rates.

Identified the most significant adoption blockers: trust issues such as produce and discount accuracy and false camera triggers, and high-cost exceptions such as staff dependency and difficult error recovery.

Enhanced the quality of structured intercept data by reducing positivity bias and converting ratings into actionable diagnostic insights.

Developed a severity and ownership triage framework to align Engineering, UX, and Retail Operations on immediate priorities versus longer-term changes.

Core Insight: When trust is compromised, adoption declines more rapidly than convenience improvements can restore it.

If shoppers lose trust in the cart's accuracy or reliability, adoption declines sharply, regardless of convenience benefits. A single doubt about price or weight, or one incorrect flag, can negate every convenience gain and reduce repeat usage and completed sessions, as seen during the pilot. This yields a testable hypothesis: improving trust and exception recovery should increase completion and repeat intent. In a retail pilot, reliability is a prerequisite for scalable growth.

Overview

Caper piloted its Smart Cart in a live grocery store to demonstrate adoption and reliability for retail partners such as Kroger. Despite promises of faster checkout and real-time spend visibility, usage was inconsistent, and a significant portion of shopping sessions were not completed.

During four weeks on-site, I conducted field research to identify adoption barriers and translate findings into an actionable triage plan for Engineering, UX, Operations, and Go-to-Market teams.

Key signal: I observed a 1-in-5 rate of incomplete/disrupted transactions during the pilot window.

Business stakes

Retain and expand the Kroger relationship

Increase completed Smart Cart transactions

Demonstrate reliability at scale to support expansion

Reduce high-severity friction signals that deter repeat use

My role (Embedded Field Research)

Over a concentrated 4-week period, I:

Observed live shopping behavior end-to-end (scan, bag, produce, checkout)

Ran semi-moderated intercept interviews immediately post-use

Logged breakdowns and exception handling patterns

Tracked incomplete/disrupted transactions weekly

Flagged and corrected response-bias risks in a structured intercept instrument

Escalated high-severity issues through Slack and support tickets to feed triage

Methods

1. Ethnographic observation

Observed shoppers in real time as they scanned, bagged, weighed produce, paid, and handled exceptions. This approach enabled rapid identification of journey-level breakdowns and the surfacing of adoption blockers during active trips.

2. Semi-moderated intercept interviews

Captured immediate reactions to friction, assessed troubleshooting tolerance, and measured intent to reuse. This method provided actionable context on abandonment and showed when the risk of drop-off or negative feedback was highest.

3. Observed incomplete/disrupted transactions

| Week | Incomplete transactions | Total observed | Incomplete rate |
|---|---|---|---|
| Week 1 | 18 | 76 | 23.7% |
| Week 2 | 16 | 82 | 19.5% |
| Week 3 | 13 | 72 | 18.1% |
| Week 4 | 18 | 84 | 21.4% |
| Total | 65 | 314 | 20.7% |

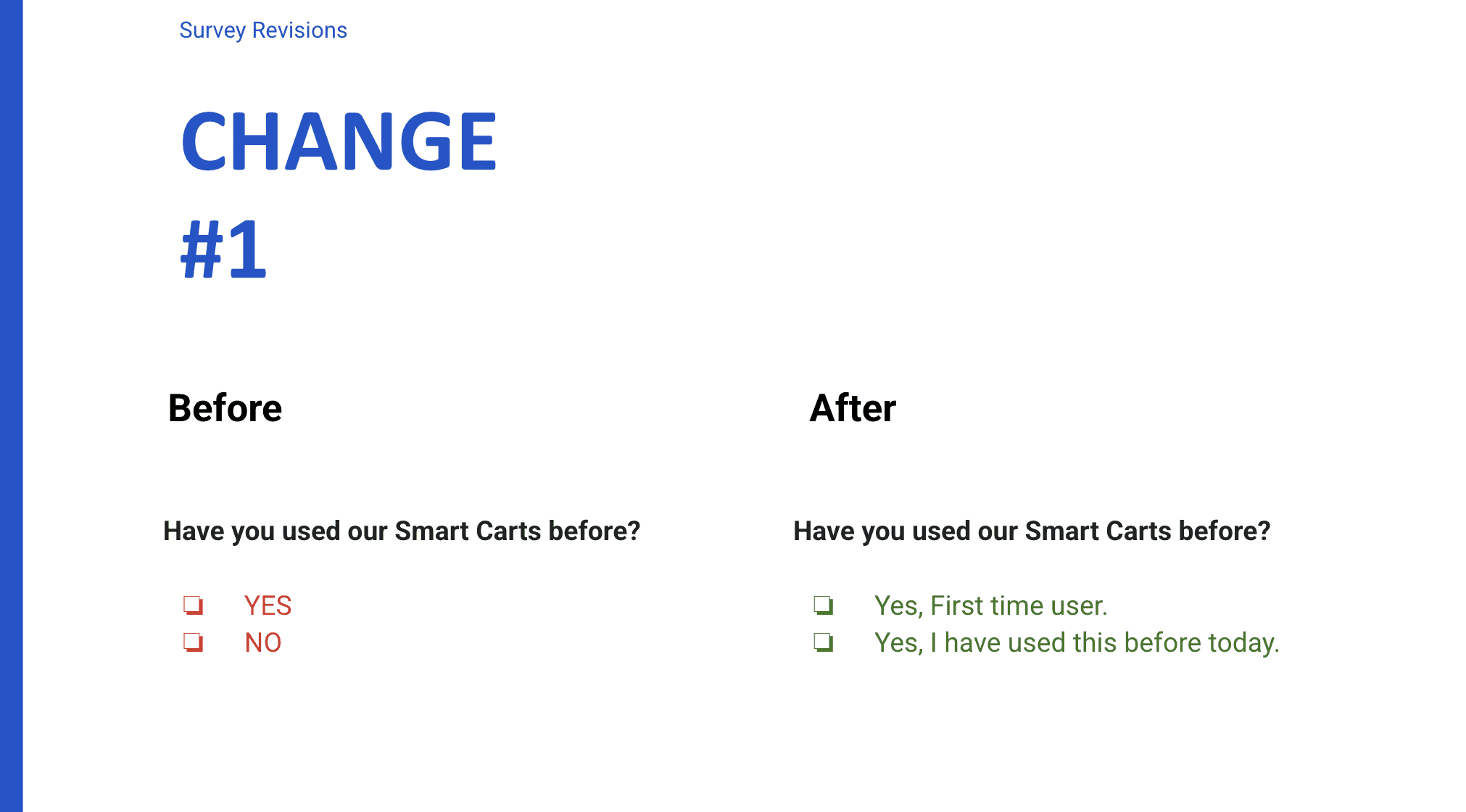
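As a quick arithmetic check, the weekly and total rates in the table can be recomputed from the raw counts. This is a minimal Python sketch; the helper name `incomplete_rate` is hypothetical. The total is computed by pooling the raw counts (65 of 314) rather than averaging the four weekly percentages, which matches the Total row.

```python
# Weekly (incomplete, total observed) counts copied from the table above.
weeks = {
    "Week 1": (18, 76),
    "Week 2": (16, 82),
    "Week 3": (13, 72),
    "Week 4": (18, 84),
}


def incomplete_rate(incomplete: int, total: int) -> float:
    """Percentage of observed sessions that ended incomplete or disrupted."""
    return 100 * incomplete / total


for week, (incomplete, total) in weeks.items():
    print(f"{week}: {incomplete}/{total} = {incomplete_rate(incomplete, total):.1f}%")

# Pool the raw counts so weeks with more observed sessions carry more weight.
total_incomplete = sum(i for i, _ in weeks.values())
total_observed = sum(t for _, t in weeks.values())
print(f"Total: {total_incomplete}/{total_observed} = "
      f"{incomplete_rate(total_incomplete, total_observed):.1f}%")
```

The pooled rate of 20.7% is what supports the "one in five" summary used throughout this case study.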

4. Survey Instrument Revision

After reviewing the draft intercept questions, I flagged framing patterns likely to introduce positive-response bias and reduce diagnostic value, then restructured the prompts to capture friction and dissatisfaction more accurately. These changes let structured feedback corroborate issues found in observation and interviews, while also providing segmented, quantitative evidence for commercial decision-making.

Phase 2: Research Instrument Integrity & Bias Correction

After initial pilot observations, the team asked me to gather structured participant feedback using a prewritten set of intercept questions shared via email. While I wasn’t involved in research planning or question development, I reviewed the instrument and flagged patterns likely to introduce response bias and reduce diagnostic value.

Usage history segmentation

Original: “Have you used our Smart Carts before? Yes / No”

Risk: Collapses first-time friction and repeat tolerance into one bucket

Revision: First-time user / Returning user

Benefit: Enabled separation of onboarding friction vs adoption stickiness

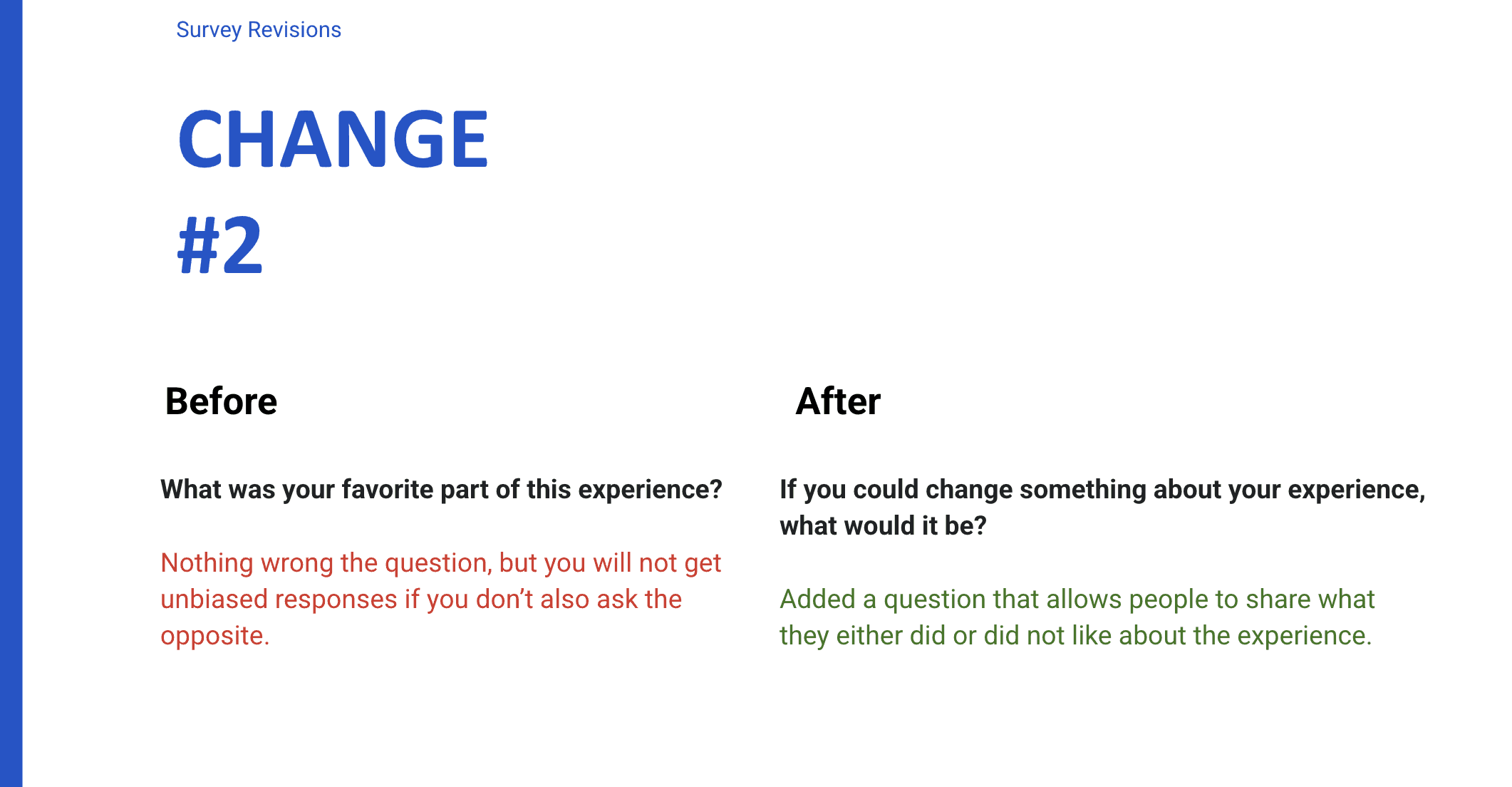

Balanced valence prompts

Original: “What was your favorite part of the experience?”

Risk: Positive-only recall encourages polite praise and suppresses friction signals

Revision: Added a counterpart prompt: “If you could change one thing, what would it be?”

Benefit: Surfaced actionable barriers without losing positives

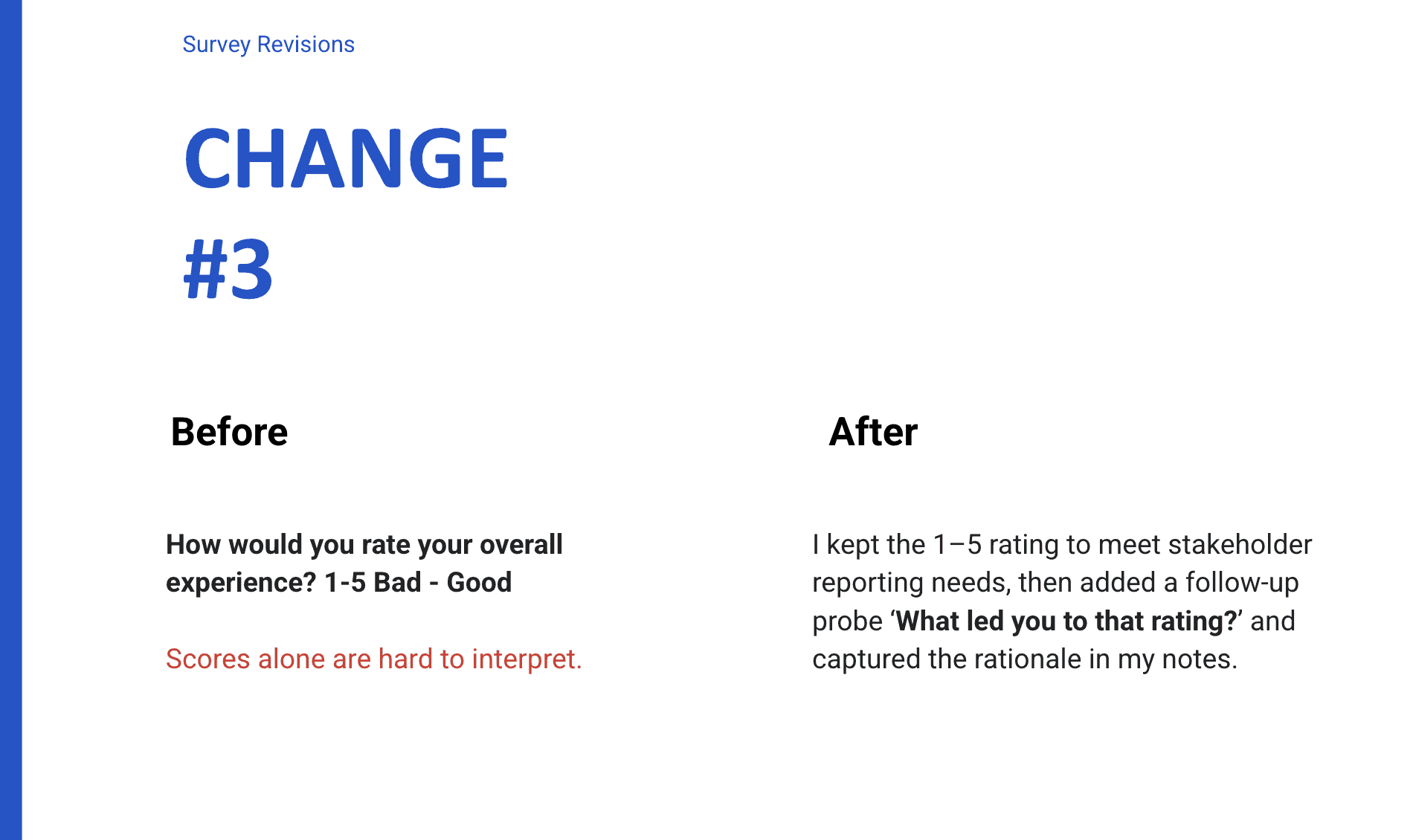

Rating plus diagnostic follow-up

Original: “How would you rate your overall experience (1–5)?”

Risk: Scores alone are shallow and prone to social-desirability bias

Revision: Kept the rating for stakeholder reporting and added: “What led you to that rating?”

Benefit: Turned sentiment into explainable, actionable themes for UX/Engineering

Result

These changes increased data reliability and actionability by reducing positivity bias, enabling segmentation by user type, and converting numeric ratings into diagnostic insights. The findings revealed layered adoption barriers rather than isolated usability issues.

Insight 1: Trust is a prerequisite for autonomy

Shoppers frequently expressed a need for confidence in the accuracy of total amounts, particularly for produce weight and discounted items. When cart calculations seemed uncertain, the promise of spending control was replaced by concerns about overcharging, reducing willingness to continue.

Trust erosion in shoppers' own words: these direct sentiments show that moments of doubt not only disrupt the experience but also pose a real risk to future revenue.

Unclear discount pricing made it hard to trust the final total.

If the weight/price feels off even once, it’s not worth using again.

Insight 2: Adoption tolerance is contextual

Shoppers often reported low willingness to troubleshoot when rushed, fatigued, or caring for children. In these situations, a single scanning failure typically ended the attempt rather than prompting problem-solving.

Representative shopper sentiment

I didn’t have the energy to figure this out today.

In a hurry, I’ll default to the fastest known path.

Insight 3: Habit identity shapes willingness to adopt

Shoppers often described shopping as a personal routine, including how they bag items, the order in which they shop, and their preferred checkout method. The cart introduced not only a new interface but also required changes to established habits.

Representative shopper sentiment

This doesn’t fit how I shop or like to bag my groceries.

I have a specific flow and prefer to stick with it.

Insight 4: Reliability determines commercial viability

Shoppers who abandoned the cart usually did so because reliability issues forced repeated exception handling, not because they disliked the product itself. In a retail pilot, these disruptions are risk signals: negative experiences affect repeat use and word of mouth even when checkout ultimately succeeds.

Representative shopper sentiment

It’s convenient when it works, but one disruption makes it not worth it.

If I need help too often, I’ll go back to normal checkout.

Insight 5: Perceived speed must match the promise

Shoppers described the cart as “fast” only when no issues occurred. Calibration delays, payment confusion, or staff-dependent exceptions, such as age verification, undermined the time-saving promise and sometimes required rework at self-checkout.

Representative shopper sentiment

This isn’t faster if I still have to wait for help or redo steps.

I expected to save time, but exceptions erased the benefit.

Structural Barriers Identified

Engineering Layer

Calibration sensitivity

Produce miscalculations

Amex metal card failures

No Apple Pay prompting

Sensor blockage from flowers and side bags

Experience Layer

Cart size mismatch for large trips

Confusion around voiding items

Discount visibility issues

Behavioral Layer

Trust erosion

Identity resistance

Fatigue sensitivity

Context-dependent adoption

Recommendations

Prioritize calibration reliability before expansion.

Increase pricing transparency during scan flow.

Simplify error recovery language and reduce punitive system messaging.

Segment deployment strategy by trip type (quick trips vs full hauls).

Align marketing promise with real performance thresholds.

Adoption Risk and Behavioral Insight Framework

1. Calibration / produce miscalculation - “Trust in totals”

Observed: Shoppers repeatedly questioned produce totals when the scale felt inconsistent or slow to calibrate.

Need: Confidence that price/weight is correct before committing to checkout.

Impact: Even a single doubt about being overcharged can permanently deter repeat usage.

Owner: Engineering + UX messaging (status/confirmation).

2. “Saving time” mismatch - “Expectation gap”

Observed: The promise of speed didn’t match reality when exceptions occurred (verification, errors, rescans).

Need: Set honest expectations and protect the “time saved” story by reducing exception costs.

Impact: Disappointment increases abandonment and undermines retailer trust in scaling.

Owner: Growth positioning + Ops + Product.

3. Camera false positives (flowers/purse/undercarriage) - “Accusation effect”

Observed: Personal items and undercarriage goods repeatedly triggered camera alerts.

Need: Avoid making honest shoppers feel flagged or suspected.

Impact: Emotional trust breaks are more damaging than minor usability friction.

Owner: Engineering + UX tone/messaging.

4. Big items hard to scan - “Recognition + ergonomics”

Observed: Large/bulky items were repeatedly difficult to scan or register smoothly.

Need: Fast, forgiving capture for high-friction item categories.

Impact: Slows the trip, increases errors, and raises abandonment risk for time-pressed shoppers.

Owner: Engineering (recognition) + UX (guidance).

5. Apple Pay not prompted - “Payment discoverability”

Observed: Some shoppers didn’t realize Apple Pay was available or weren’t prompted at the right moment.

Need: Clear, timely payment option prompts.

Impact: Avoidable checkout confusion at the highest-stakes moment.

Owner: UX.

6. Cart size - “Best-fit trip type”

Observed: The cart felt best suited for smaller, convenience trips; larger trips hit capacity/organization constraints.

Need: Align product positioning with the trip it best supports.

Impact: Improves adoption by matching expectations to use case.

Owner: Growth + product strategy.

7. Control spending - “Adoption driver”

Observed: Real-time total visibility helped shoppers feel in control of their budget.

Opportunity: Make budget control a primary value prop (and reinforce with UI).

Owner: Product + Growth.

8. Amex / “American card” - “Payment compatibility risk”

Observed: Certain card types repeatedly failed or created checkout friction.

Need: Reliability across common payment methods.

Impact: A single payment failure negates the whole experience.

Owner: Engineering/payments.

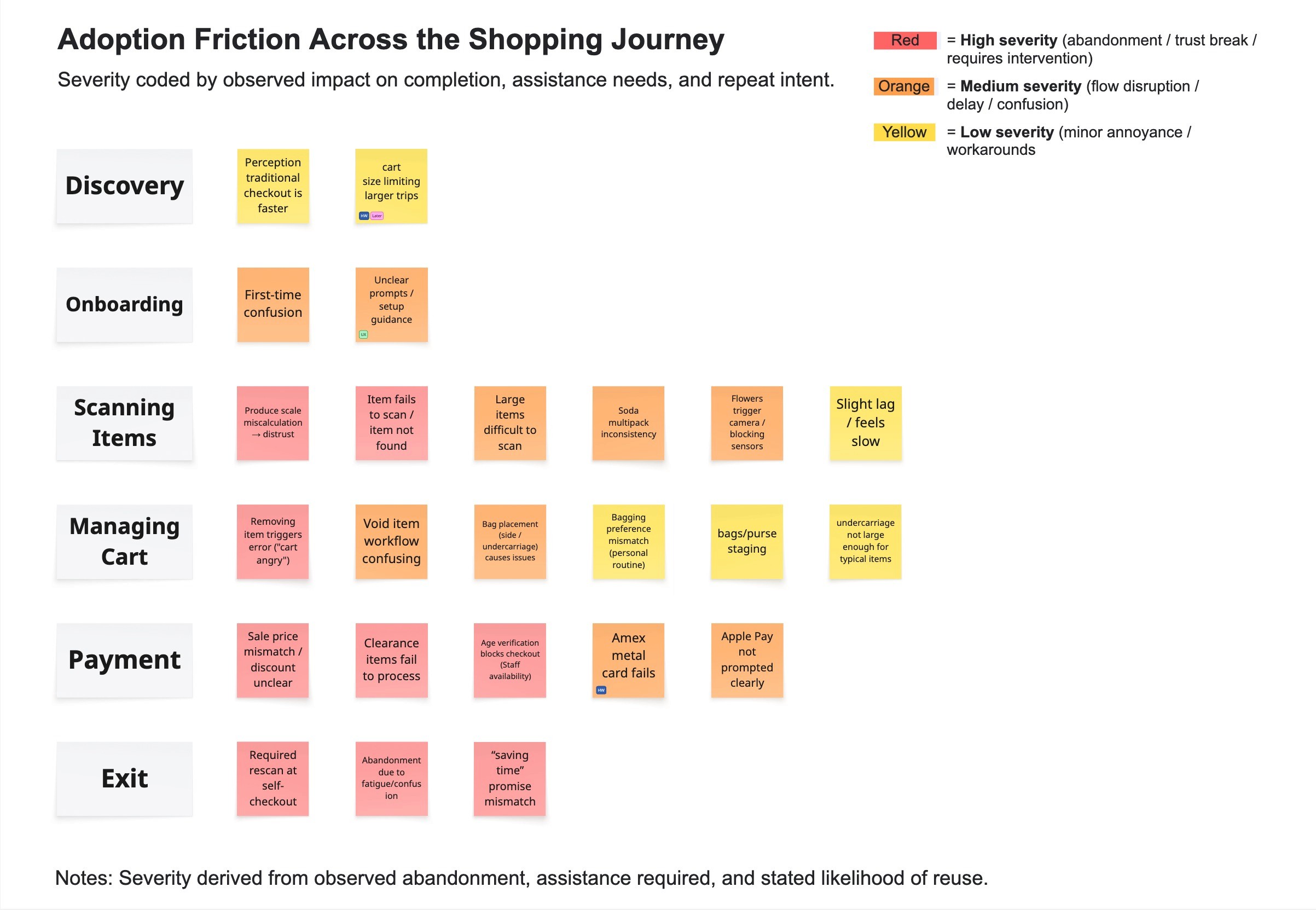

I mapped friction points across the end-to-end journey and graded severity based on impact on completion, required staff assistance, and intent to repeat. Each issue was tagged by fix lever (HW, ENG, UX, OPS, GTM) to distinguish quick wins from those requiring hardware or operational changes.

Tag Definitions

HW = requires physical redesign or new attachment (long lead time)

ENG = software/model/calibration/payments reliability (medium lead time)

UX = UI copy, flows, prompts, guidance (short lead time)

OPS/Policy = staffing, store process, verification rules (org/change mgmt)

GTM/Growth = positioning, expectation-setting, targeting (immediate)

Now / Next / Later (or Quick win / Medium / Long-term)

| Theme (Severity) | Issue | Why it matters | Fix lever | Horizon |
|---|---|---|---|---|
| P0 Trust breaker | Produce miscalculation / calibration instability | Fear of overpaying → distrust → abandonment / no repeat | ENG | Next/Later |
| P0 Trust breaker | False camera triggers (flowers/purse/undercarriage) | “Feeling accused” breaks trust disproportionately | ENG (+UX tone) | Next |
| P0 Trust breaker | Sale/discount accuracy doubts | Price uncertainty undermines the initial value proposition | ENG/OPS | Next |
| P1 Flow breaker | Age verification needs associate → rescan | Converts “save time” into a time sink | OPS/Policy | Next |
| P1 Flow breaker | Voiding/removing item confusion | Common exception → requires recovery → staff help | UX | Now |
| P1 Flow breaker | Apple Pay not prompted/unclear | Checkout confusion at the highest-stakes moment | UX | Now |
| P1 Flow breaker | Item not found recovery flow | Breaks scanning momentum; increases fatigue | UX/ENG | Now/Next |
| P2 Constraint | No bag/purse staging area | Hands full → slower scanning, fatigue | HW | Later |
| P2 Constraint | Cart size limits the trip type | Impacts segment fit; affects positioning | HW + GTM | Later/Now (positioning) |
| Edge-case reliability | Amex compatibility | Payment failure negates the entire trip | ENG | Next |
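To illustrate how the triage could be kept as structured data, here is a minimal Python sketch that stores the table rows as records, orders them by severity and then by horizon, and groups them into per-owner queues. The `Issue` record, the numeric ranks, and the rule of using the earliest horizon for split cells (such as `Next/Later`) are illustrative assumptions, not part of the original framework.

```python
from dataclasses import dataclass

# Illustrative ranks (assumption): lower numbers are handled first.
SEVERITY_RANK = {"P0": 0, "P1": 1, "P2": 2, "Edge": 3}
HORIZON_RANK = {"Now": 0, "Next": 1, "Later": 2}


@dataclass(frozen=True)
class Issue:
    severity: str            # "P0", "P1", "P2", or "Edge" (edge-case reliability)
    name: str
    levers: tuple[str, ...]  # fix levers from the tag definitions: HW, ENG, UX, OPS, GTM
    horizon: str             # earliest horizon listed (assumption for split cells)


# Rows transcribed from the triage table above.
ISSUES = [
    Issue("P0", "Produce miscalculation / calibration instability", ("ENG",), "Next"),
    Issue("P0", "False camera triggers (flowers/purse/undercarriage)", ("ENG", "UX"), "Next"),
    Issue("P0", "Sale/discount accuracy doubts", ("ENG", "OPS"), "Next"),
    Issue("P1", "Age verification needs associate → rescan", ("OPS",), "Next"),
    Issue("P1", "Voiding/removing item confusion", ("UX",), "Now"),
    Issue("P1", "Apple Pay not prompted/unclear", ("UX",), "Now"),
    Issue("P1", "Item not found recovery flow", ("UX", "ENG"), "Now"),
    Issue("P2", "No bag/purse staging area", ("HW",), "Later"),
    Issue("P2", "Cart size limits the trip type", ("HW", "GTM"), "Now"),
    Issue("Edge", "Amex compatibility", ("ENG",), "Next"),
]


def triage_order(issues):
    """Sort by severity first, then by how soon a fix can ship.

    sorted() is stable, so ties keep their original table order.
    """
    return sorted(issues, key=lambda i: (SEVERITY_RANK[i.severity], HORIZON_RANK[i.horizon]))


def by_owner(issues):
    """Group issue names under each fix lever so each team sees its own queue."""
    queues = {}
    for issue in issues:
        for lever in issue.levers:
            queues.setdefault(lever, []).append(issue.name)
    return queues


if __name__ == "__main__":
    for issue in triage_order(ISSUES):
        print(f"{issue.severity:<4} {issue.horizon:<5} {issue.name}")
    print(by_owner(ISSUES)["UX"])
```

Sorting by severity first keeps every P0 trust breaker at the top even when a P1 fix could ship sooner; within each tier, `Now` items surface as quick wins.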

Impact

Established a severity-based diagnostic framework for a live retail pilot, aligning UX, Engineering, and Retail Ops on what to fix first.

Identified recurring trust-breakers (price/weight confidence, false camera triggers, payment reliability) that disproportionately affected completion and repeat intent.

Improved the integrity of structured intercept research by removing leading bias, adding first-time vs returning segmentation, and capturing qualitative rationale behind ratings.

Created journey-level friction mapping and an ownership triage (ENG/UX/OPS/HW/GTM) to separate quick wins from constraints requiring hardware or policy changes.